Will AI Replace QA Engineers? An Honest Answer for 2026

You open ChatGPT, type "Write Playwright tests for user login", and get working, production-ready test code in 10 seconds. GitHub Copilot autocompletes your entire test suite as you type. AI tools detect flaky tests, fix broken selectors, and generate edge cases you never thought of.

Question: If AI can do all this, what's left for QA engineers?

This isn't fear-mongering; it's a legitimate question as AI capabilities expand rapidly. (For a full breakdown of the industry landscape, see our 2026 LLM Testing Buyers Guide.) But here's the reality after working with AI testing tools daily:

AI won't replace QA engineers. It will eliminate roughly 40% of current tasks and make the remaining 60% far more valuable.

QA engineers who adapt will become Quality Strategists—professionals who leverage AI to test at scale while focusing on things machines can't do: understanding user needs, making strategic tradeoffs, and defining what quality actually means for your business.

This guide explores what's changing, what's staying, and how to position your career for the AI-powered future of quality assurance.

The AI Testing Evolution

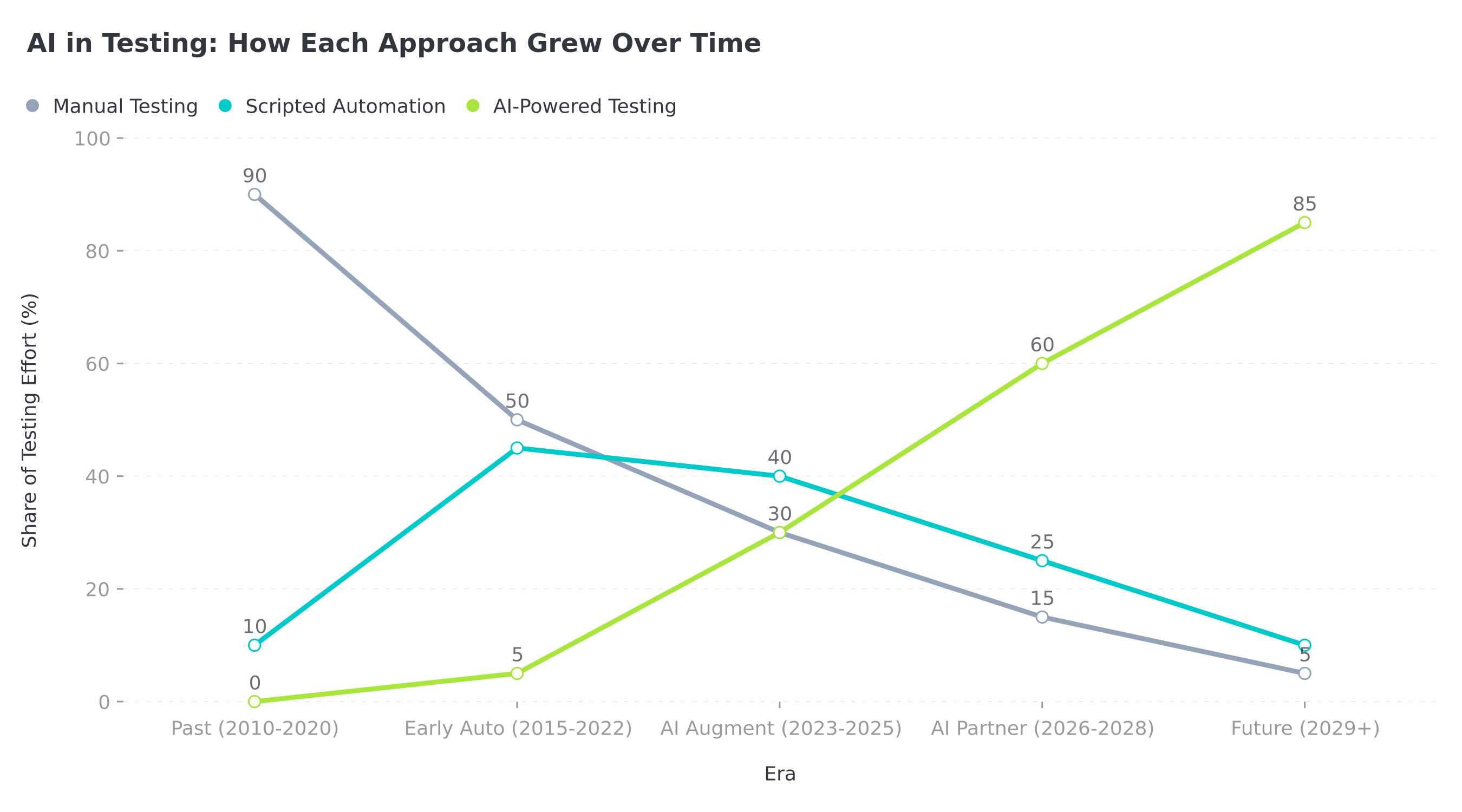

Share of testing effort (%) by approach across five eras. Manual Testing (grey) declines from 90% to near-zero; Scripted Automation (teal) peaks in the early automation era then fades; AI-Powered Testing (green) rises from 0% to 85% by 2029+.

What AI Is Already Automating

1. Test Code Generation

// What QA engineers wrote before AI (2020):

describe('Login flow', () => {

it('should allow user to log in with valid credentials', async () => {

await page.goto('https://app.example.com/login');

await page.fill('input[name="email"]', 'test@example.com');

await page.fill('input[name="password"]', 'Password123!');

await page.click('button[type="submit"]');

await expect(page.locator('.dashboard-header')).toBeVisible();

});

it('should show error for invalid password', async () => {

await page.goto('https://app.example.com/login');

await page.fill('input[name="email"]', 'test@example.com');

await page.fill('input[name="password"]', 'wrongpassword');

await page.click('button[type="submit"]');

await expect(page.locator('.error-message')).toContainText('Invalid credentials');

});

});

// What AI generates now (2026) from natural language:

// Prompt: "Write comprehensive login tests including validation, errors, SSO"

import { test, expect } from '@playwright/test';

test.describe('Complete Login Flow', () => {

test.beforeEach(async ({ page }) => {

await page.goto('/login');

});

test.describe('Valid Login Scenarios', () => {

test('standard email/password login succeeds', async ({ page }) => {

await page.getByLabel('Email').fill('test@example.com');

await page.getByLabel('Password').fill('SecurePass123!');

await page.getByRole('button', { name: 'Sign In' }).click();

await expect(page).toHaveURL('/dashboard');

await expect(page.getByText('Welcome back')).toBeVisible();

});

test('remember me persists session', async ({ page, context }) => {

await page.getByLabel('Email').fill('test@example.com');

await page.getByLabel('Password').fill('SecurePass123!');

await page.getByLabel('Remember me').check();

await page.getByRole('button', { name: 'Sign In' }).click();

const cookies = await context.cookies();

expect(cookies.find((c) => c.name === 'session_token')).toBeDefined();

expect(cookies.find((c) => c.name === 'session_token')?.expires).toBeGreaterThan(Date.now() / 1000 + 86400 * 30);

});

});

test.describe('SSO Integration', () => {

test('Google OAuth flow completes successfully', async ({ page, context }) => {

const [popup] = await Promise.all([

context.waitForEvent('page'),

page.getByRole('button', { name: 'Continue with Google' }).click(),

]);

await popup.waitForLoadState();

await expect(popup).toHaveURL(/accounts\.google\.com/);

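// Mock OAuth flow for testing: message the opener (the app's login page),

// not the popup itself, so the app actually receives the success event

await popup.evaluate(() => {

window.opener?.postMessage({ type: 'oauth_success', provider: 'google' }, '*');

});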

await expect(page).toHaveURL('/dashboard');

});

});

test.describe('Validation & Error Handling', () => {

test('shows error for invalid email format', async ({ page }) => {

await page.getByLabel('Email').fill('notanemail');

await page.getByLabel('Password').fill('SecurePass123!');

await page.getByRole('button', { name: 'Sign In' }).click();

await expect(page.getByText('Please enter a valid email')).toBeVisible();

});

test('shows error for weak password', async ({ page }) => {

await page.getByLabel('Email').fill('test@example.com');

await page.getByLabel('Password').fill('123');

await page.getByRole('button', { name: 'Sign In' }).click();

await expect(page.getByText(/password must be at least/i)).toBeVisible();

});

test('rate limits after 5 failed attempts', async ({ page }) => {

for (let i = 0; i < 5; i++) {

await page.getByLabel('Email').fill('test@example.com');

await page.getByLabel('Password').fill('wrongpass');

await page.getByRole('button', { name: 'Sign In' }).click();

await page.waitForTimeout(100);

}

await expect(page.getByText('Too many login attempts')).toBeVisible();

await expect(page.getByRole('button', { name: 'Sign In' })).toBeDisabled();

});

});

test.describe('Accessibility', () => {

test('login form is keyboard navigable', async ({ page }) => {

await page.keyboard.press('Tab');

await expect(page.getByLabel('Email')).toBeFocused();

await page.keyboard.press('Tab');

await expect(page.getByLabel('Password')).toBeFocused();

await page.keyboard.press('Tab');

await expect(page.getByRole('button', { name: 'Sign In' })).toBeFocused();

});

test('has proper ARIA labels', async ({ page }) => {

const emailInput = page.getByLabel('Email');

await expect(emailInput).toHaveAttribute('aria-required', 'true');

const passwordInput = page.getByLabel('Password');

await expect(passwordInput).toHaveAttribute('type', 'password');

});

});

});

// AI generated 8 tests in seconds; writing them by hand could take hours

Impact: AI reduces test writing time by 70-80%, but someone still needs to decide what to test.

2. Flaky Test Detection

# AI-powered flaky test detection config

# ai-test-analyzer.yml

flaky_detection:

enabled: true

analysis_window: 30d

min_runs: 10

patterns:

- name: 'Timing Issues'

indicators:

- 'setTimeout'

- 'waitForTimeout'

- 'sleep'

suggestion: 'Replace with waitForSelector or waitForFunction'

- name: 'Network Dependency'

indicators:

- 'fetch'

- 'axios'

- 'http.get'

suggestion: 'Mock external APIs or use API fixtures'

- name: 'Animation Race Condition'

indicators:

- 'click() immediately after page.goto()'

- 'fill() without waitForLoadState'

suggestion: 'Wait for element to be stable before interaction'

auto_fix:

enabled: true

strategies:

- 'Add explicit waits'

- 'Increase timeout for known slow operations'

- 'Retry on specific error patterns'

reporting:

slack_webhook: '${SLACK_WEBHOOK_URL}'

create_jira_ticket: true

assign_to: '@qa-team'

Impact: Flaky test detection that took hours of manual analysis now happens automatically.
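
To make the idea concrete, here is a minimal sketch of one common flakiness heuristic: a test that both passes and fails on the same commit is probably flaky, because the code did not change between runs. This is not how any particular tool works. The `TestRun` shape, the `findFlakyTests` name, and the default of 10 runs (mirroring `min_runs` in the config above) are all assumptions for illustration, and the 30-day analysis window is left out for brevity.

```typescript
// Hypothetical record of one test execution; field names are illustrative only.
interface TestRun {
  testName: string;
  commitSha: string;
  passed: boolean;
}

interface FlakyReport {
  testName: string;
  totalRuns: number;
  failureRate: number;
}

// Flags tests that produced both a pass and a fail on the same commit.
// Tests with fewer than minRuns executions are skipped as "not enough data".
function findFlakyTests(runs: TestRun[], minRuns: number = 10): FlakyReport[] {
  // Group runs by test name.
  const byTest: Record<string, TestRun[]> = {};
  for (const run of runs) {
    (byTest[run.testName] = byTest[run.testName] || []).push(run);
  }

  const reports: FlakyReport[] = [];
  for (const testName of Object.keys(byTest)) {
    const testRuns = byTest[testName];
    if (testRuns.length < minRuns) continue;

    // Track which outcomes each commit has produced.
    const seen: Record<string, { pass: boolean; fail: boolean }> = {};
    for (const run of testRuns) {
      const entry = seen[run.commitSha] || (seen[run.commitSha] = { pass: false, fail: false });
      if (run.passed) entry.pass = true;
      else entry.fail = true;
    }

    const hasMixedCommit = Object.keys(seen).some((sha) => seen[sha].pass && seen[sha].fail);
    if (hasMixedCommit) {
      const failures = testRuns.filter((r) => !r.passed).length;
      reports.push({ testName, totalRuns: testRuns.length, failureRate: failures / testRuns.length });
    }
  }
  return reports;
}
```

Note that a test failing on every run is not reported here: it is consistently broken rather than flaky, and deciding what to do about it is still a human call.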

What AI Cannot Replace (Yet)

| Task | AI Capability (2026) | Human Still Required |
|---|---|---|
| Write test code | Excellent (90%) | Review & edge cases |
| Find UI bugs | Good (70%) | Subtle UX issues |
| Performance regression | Excellent (95%) | Interpreting impact |
| Security vulnerabilities | Good (60%) | Business logic flaws |
| Understanding user needs | Poor (20%) | ✅ QA expertise |
| Strategic test planning | Fair (40%) | ✅ QA expertise |
| Prioritizing what to test | Fair (35%) | ✅ QA expertise |
| Defining quality standards | Poor (10%) | ✅ QA expertise |
| Business risk assessment | Poor (15%) | ✅ QA expertise |
| Cross-team collaboration | None (0%) | ✅ QA expertise |

The Evolving QA Role

Before AI (2020): Test Execution Focus

pie title QA Time Allocation (2020)

"Writing Tests" : 35

"Executing Manual Tests" : 30

"Bug Reporting" : 15

"Test Maintenance" : 10

"Strategy & Planning" : 5

"Collaboration" : 5

With AI (2026): Strategy & Quality Focus

pie title QA Time Allocation (2026)

"Strategy & Planning" : 30

"Quality Architecture" : 20

"AI Tool Oversight" : 15

"Risk Assessment" : 15

"Collaboration" : 10

"Writing Tests" : 5

"Manual Exploratory Testing" : 5

Skills That Matter More Than Ever

1. Quality Strategy

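// QA Engineer as Quality Strategist

// Hypothetical input types (assumed for illustration; adapt to your own domain model)

interface Product {

name: string;

}

interface Feature {

name: string;

affectedUsers: number; // normalized score, e.g. 0-10

revenueImpact: number; // normalized score, e.g. 0-10

technicalComplexity: number; // normalized score, e.g. 0-10

hasRegulatoryRequirements: boolean;

}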

interface QualityStrategy {

objectives: string[];

testingApproach: {

automated: string[]; // What AI handles

manual: string[]; // What humans do best

exploratory: string[];

};

riskAssessment: {

high: string[];

medium: string[];

low: string[];

};

successMetrics: {

coverage: number;

bugEscapeRate: number;

deploymentFrequency: number;

};

}

class QualityStrategist {

defineStrategy(product: Product): QualityStrategy {

// AI can't make these strategic decisions

return {

objectives: ['Zero critical bugs in production', '95% automated test coverage', 'Deploy 3x/week safely'],

testingApproach: {

automated: [

'API contract tests (AI-generated)',

'Regression suite (self-healing)',

'Performance benchmarks (ML anomaly detection)',

],

manual: ['New feature exploratory testing', 'UX validation', 'Edge case discovery'],

exploratory: ['Feature interaction testing', 'User journey validation', 'Accessibility review'],

},

riskAssessment: {

high: ['Payment processing', 'Auth system', 'Data exports'],

medium: ['Notifications', 'Search', 'File uploads'],

low: ['UI polish', 'Analytics', 'Help text'],

},

successMetrics: {

coverage: 0.95, // matches the 95% coverage objective above

bugEscapeRate: 0.02,

deploymentFrequency: 3,

},

};

}

prioritizeTestEffort(features: Feature[]): Feature[] {

// AI suggests priorities, human makes final call based on business context

return features

.map((f) => ({

...f,

testPriority: this.calculatePriority(f),

businessImpact: this.assessBusinessImpact(f),

technicalRisk: this.assessTechnicalRisk(f),

}))

.sort((a, b) => b.testPriority - a.testPriority);

}

private calculatePriority(feature: Feature): number {

// Business context AI doesn't have

const userCount = feature.affectedUsers;

const revenue = feature.revenueImpact;

const complexity = feature.technicalComplexity;

const regulatory = feature.hasRegulatoryRequirements ? 2 : 1;

return (userCount * 0.3 + revenue * 0.4 + complexity * 0.2) * regulatory;

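}

private assessBusinessImpact(feature: Feature): number {

// Placeholder heuristic (an assumption): weight revenue over reach

return feature.revenueImpact * 0.6 + feature.affectedUsers * 0.4;

}

private assessTechnicalRisk(feature: Feature): number {

// Placeholder heuristic (an assumption): use complexity as the risk proxy

return feature.technicalComplexity;

}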

}
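
To see how the weighting in `calculatePriority` plays out, here is a small worked example. It reuses the same formula and assumes every input has already been normalized to a 0-10 score; raw user counts and dollar amounts would otherwise swamp the other terms. The feature names come from the risk lists above, but the scores themselves are made up for illustration.

```typescript
// Hypothetical feature scores, each assumed to be normalized to a 0-10 scale.
interface ScoredFeature {
  name: string;
  affectedUsers: number;
  revenueImpact: number;
  technicalComplexity: number;
  hasRegulatoryRequirements: boolean;
}

// Same weighting as calculatePriority: users 0.3, revenue 0.4, complexity 0.2,
// doubled when the feature carries regulatory requirements.
function priorityScore(f: ScoredFeature): number {
  const regulatory = f.hasRegulatoryRequirements ? 2 : 1;
  return (f.affectedUsers * 0.3 + f.revenueImpact * 0.4 + f.technicalComplexity * 0.2) * regulatory;
}

// Illustrative scores only; a real team would derive these from its own data.
const scoredFeatures: ScoredFeature[] = [
  { name: 'Help text', affectedUsers: 3, revenueImpact: 1, technicalComplexity: 1, hasRegulatoryRequirements: false },
  { name: 'Payment processing', affectedUsers: 8, revenueImpact: 9, technicalComplexity: 5, hasRegulatoryRequirements: true },
  { name: 'Search', affectedUsers: 9, revenueImpact: 4, technicalComplexity: 6, hasRegulatoryRequirements: false },
];

// Sort a copy so the original order is preserved.
const rankedFeatures = scoredFeatures.slice().sort((a, b) => priorityScore(b) - priorityScore(a));

for (const f of rankedFeatures) {
  console.log(`${f.name}: ${priorityScore(f).toFixed(1)}`);
}
```

With these scores, payment processing lands at about 14.0, search at about 5.5, and help text at about 1.5. The regulatory multiplier is what pushes payments so far ahead, and whether a 2x multiplier is right for your product is exactly the kind of judgment the formula can't make for you.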

2. Understanding User Experience

# AI can detect technical bugs, but not UX problems

# AI detects:

✅ Button is clickable

✅ Form submits successfully

✅ Page loads in 2.3s

# Human QA detects:

❓ Button label is confusing

❓ Form validation errors are unclear

❓ Page feels slow despite 2.3s load time (perceived performance)

❓ Color contrast makes text hard to read

❓ Workflow requires too many steps

3. Business Context & Risk Assessment

// Example: Release decision only humans can make

interface ReleaseDecision {

goNoGo: 'GO' | 'NO_GO';

reasoning: string;

mitigations: string[];

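}

// Hypothetical input types (assumed for illustration)

interface TestResults {

failures: { name: string; area: string }[];

}

interface BusinessContext {

date: string; // ISO date, e.g. '2026-11-27'

canRollback: boolean;

dailyRevenue: number;

}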

function makeReleaseDecision(aiTestResults: TestResults, businessContext: BusinessContext): ReleaseDecision {

// AI says: 3 failing tests (2 UI, 1 API)

// Human must consider:

const isBlackFriday = businessContext.date === '2026-11-27';

const affectsCheckout = aiTestResults.failures.some((f) => f.area === 'checkout');

const hasSafeRollback = businessContext.canRollback;

const revenueAtRisk = businessContext.dailyRevenue;

if (affectsCheckout && isBlackFriday) {

// Business context: Don't risk $500k revenue day

return {

goNoGo: 'NO_GO',

reasoning: 'Checkout issues on Black Friday = unacceptable business risk',

mitigations: ['Fix checkout bug first', 'Deploy Monday after holiday weekend', 'Add extra monitoring'],

};

} else if (!affectsCheckout && hasSafeRollback) {

// Technical risk is acceptable

return {

goNoGo: 'GO',

reasoning: 'UI bugs are low severity, safe rollback available',

mitigations: ['Deploy during low-traffic window', 'Monitor error rates closely', 'Fix UI bugs in next patch'],

};

}

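// AI can't make this nuanced judgment call. Anything not covered above

// (e.g. checkout failures on a normal day, or no safe rollback) defaults

// to NO_GO here until a human has reviewed it (an assumed safe default)

return {

goNoGo: 'NO_GO',

reasoning: 'Failures need human review before release',

mitigations: ['Triage the failing tests', 'Confirm the rollback plan', 'Re-evaluate once the risk is understood'],

};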

}

Future-Proofing Your QA Career

Skills to Develop Now

| Skill | Why It Matters | How to Learn |
|---|---|---|
| AI Tool Proficiency | Work with AI, not against it | Use ChatGPT/Copilot daily, learn AI-assisted QA test generation |
| Quality Architecture | Design testable systems | Learn design patterns, observability, testing strategies |
| Risk Assessment | Prioritize testing efforts | Study failure modes, business impact analysis |
| Communication | Influence product decisions | Practice writing RFCs, presenting to stakeholders |
| Product Thinking | Understand user needs | Shadow customers, do user interviews |
| Coding Skills | Customize AI tools | Learn TypeScript, Python, CI/CD pipelines |
| Data Analysis | Interpret test metrics | Learn SQL, data visualization, statistical basics |

The "AI + Human" Testing Workflow

graph TB

A[New Feature] --> B{QA Strategic Review}

B --> C[Define Test Strategy]

C --> D[AI: Generate Test Cases]

D --> E[Human: Review & Augment]

E --> F[AI: Execute Tests]

F --> G{Tests Pass?}

G -->|Yes| H[Human: Exploratory Testing]

G -->|No| I[AI: Categorize Failures]

I --> J{Critical?}

J -->|Yes| K[Human: Deep Investigation]

J -->|No| L[AI: Auto-create Tickets]

H --> M[Human: Sign-off Decision]

K --> M

L --> M

M --> N{Ship?}

N -->|Yes| O[Deploy]

N -->|No| P[More Testing]

style B fill:#bbdefb

style C fill:#bbdefb

style E fill:#bbdefb

style H fill:#bbdefb

style K fill:#bbdefb

style M fill:#bbdefb
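
The "Critical?" branch in the workflow above can be sketched as a small triage function. This is an illustration under stated assumptions, not a real tool's API: the `TestFailure` shape, the severity labels, and the `HIGH_RISK_AREAS` list (loosely based on the high-risk features named earlier) are all hypothetical.

```typescript
type Severity = 'critical' | 'major' | 'minor';

// Hypothetical shape of a categorized failure; field names are illustrative.
interface TestFailure {
  testName: string;
  area: string;
  severity: Severity;
}

interface TriageResult {
  needsHumanInvestigation: TestFailure[]; // "Human: Deep Investigation"
  autoTicketed: TestFailure[]; // "AI: Auto-create Tickets"
}

// Assumed list of areas where any failure is escalated to a human.
const HIGH_RISK_AREAS: string[] = ['payments', 'auth', 'data-export'];

// Routes each failure down one of the two branches of the "Critical?" decision.
function triageFailures(failures: TestFailure[]): TriageResult {
  const result: TriageResult = { needsHumanInvestigation: [], autoTicketed: [] };
  for (const failure of failures) {
    const isCritical =
      failure.severity === 'critical' || HIGH_RISK_AREAS.indexOf(failure.area) !== -1;
    if (isCritical) {
      result.needsHumanInvestigation.push(failure);
    } else {
      result.autoTicketed.push(failure);
    }
  }
  return result;
}
```

Either way, both branches still feed into the human sign-off step. The function only decides who looks at a failure first, not whether the release ships.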

Real Talk: Job Market Predictions

Short Term (2026-2028)

- Manual-only QA roles: Declining rapidly (-40%)

- Automation QA roles: Shifting to "AI-assisted automation" (stable, +10%)

- QA Engineer (modern): Growing (+30%)

- Quality Strategist/SDET: High demand (+50%)

Long Term (2029-2035)

- Pure manual testing: Nearly extinct except specialized domains

- Test code writing: 80% AI-generated, 20% human review

- QA as strategic role: Core to product development

- New title: "Quality Architect" or "Testing Strategist"

What To Do Right Now

If you're a manual tester:

- ✅ Learn test automation basics (Playwright, Cypress)

- ✅ Use AI coding assistants daily (get comfortable)

- ✅ Develop product/business understanding

- ✅ Practice exploratory testing (uniquely human skill)

If you're an automation engineer:

- ✅ Master AI-powered testing tools

- ✅ Learn ML basics (understand what AI can/can't do)

- ✅ Develop strategic thinking skills

- ✅ Build influence skills (presentations, writing)

If you're a QA lead/manager:

- ✅ Redefine QA job descriptions (emphasize strategy)

- ✅ Invest in AI tool training for team

- ✅ Measure outcome metrics (bugs escaped, deployment frequency)

- ✅ Position QA as product quality partners, not gatekeepers
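
For the outcome metrics mentioned above, here is a minimal sketch of how they might be calculated. It assumes the common definition of bug escape rate (bugs found in production divided by all bugs found) and a simple deployments-per-week average. The `ReleasePeriod` shape and function names are hypothetical.

```typescript
// Hypothetical summary of one reporting period; field names are illustrative.
interface ReleasePeriod {
  bugsFoundBeforeRelease: number;
  bugsFoundInProduction: number;
  deployments: number;
  weeks: number;
}

// Share of all known bugs that reached production (0 when no bugs were found).
function bugEscapeRate(period: ReleasePeriod): number {
  const totalBugs = period.bugsFoundBeforeRelease + period.bugsFoundInProduction;
  return totalBugs === 0 ? 0 : period.bugsFoundInProduction / totalBugs;
}

// Average number of deployments per week over the period.
function deploymentsPerWeek(period: ReleasePeriod): number {
  if (period.weeks <= 0) {
    throw new RangeError('weeks must be positive');
  }
  return period.deployments / period.weeks;
}

// Made-up numbers for illustration.
const lastMonth: ReleasePeriod = {
  bugsFoundBeforeRelease: 98,
  bugsFoundInProduction: 2,
  deployments: 12,
  weeks: 4,
};

console.log(`Bug escape rate: ${(bugEscapeRate(lastMonth) * 100).toFixed(1)}%`);
console.log(`Deployments per week: ${deploymentsPerWeek(lastMonth)}`);
```

With these sample numbers the escape rate comes out at 2% and the team deploys 3 times a week, which happens to match the `bugEscapeRate: 0.02` and `deploymentFrequency: 3` targets in the strategy example earlier.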

Conclusion

Will AI replace QA engineers? No—but it will fundamentally reshape what QA engineers do.

The future QA engineer:

- Spends less time: Writing repetitive tests, clicking through apps, filing obvious bugs

- Spends more time: Defining quality standards, assessing risk, exploratory testing, strategic planning

The key insight: AI automates the execution of quality assurance. Humans still define what quality means.

Your value shifts from being "the person who runs tests" to "the person who ensures the product is actually good for users and the business."

Those who adapt will find their skills more valuable than ever. Those who resist will struggle.

The choice is yours.

